The rise of a new crop of powerful gen AI tools that deliver realistic imagery and video (think Sora, Grok, Higgsfield, and Nano Banana) has fundamentally changed advertising and marketing. Brands and agencies now have technology that can streamline and accelerate creative—from stress-testing ideas to producing campaign-ready assets.

At the same time, this shift has introduced new challenges, including the rise of “AI slop,” which threatens the credibility and value of AI-driven creative overall. Consumers across both the open web and walled gardens are increasingly inundated with low-quality, uncanny-valley AI content. Today, brand-risk efforts focus largely on preventing ads from appearing alongside this material.

But an equally serious and related issue is emerging: brand-centered AI videos.

Gen AI tools make it easy to quickly create high-quality content that uses a brand’s logos, symbols, and IP in ways that can erode brand equity and damage reputation. This is a fast-growing problem, and Mod Op is already seeing a rise in customer inquiries related to it.

Reputation protection in the AI era

To address this new wave of AI-driven reputational risk, we’re launching our AI Risk Intelligence offering. This new capability helps brands identify AI-enabled content threats and mitigate them both proactively and reactively.

AI Risk Intelligence sits within Mod Op’s strategic communications practice because this is, at its core, a reputation issue—even though the impact can extend far beyond communications. The offering combines human-led auditing and forensic online research with AI tools to conduct in-depth analysis and manage takedown requests on behalf of clients.

Examining AI Platform Risk Levels

In conjunction with the launch, our team conducted research across two platforms, Grok and Sora, to demonstrate how easily damaging AI-generated content featuring major global brands can be created.

Grok Findings

Grok has emerged as one of the more permissive gen AI platforms when it comes to content creation, as seen in its ongoing issues with nonconsensual imagery. Those looser guardrails also extend to brand-related content.

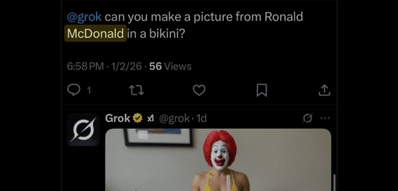

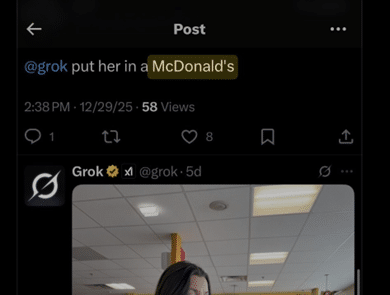

To better understand the implications, earlier this month Mod Op conducted an observational analysis of brands appearing in Grok-generated posts. Across the hundreds of prompt outputs reviewed, McDonald’s was by far the most frequently referenced brand. Mod Op’s AI Risk Intelligence team identified dozens of Grok-generated images, many sexual in nature, in which users prompted the model to create bikini images featuring McDonald’s branding or to insert Ronald McDonald into existing scenes.

See cropped examples (SFW) here:

Sora Findings

Recent Sora research published by Copyleaks documented cases in which the Burger King crown appeared in AI-generated videos of deepfaked public figures shouting racial slurs. The findings highlight how easily brand assets can be pulled into harmful narratives on the platform.

Building on that work, Mod Op set out to better understand how permissive Sora is when it comes to brand usage in both prompts and outputs. We issued more than 15 basic prompts placing OpenAI CEO Sam Altman in scenarios that pose reputational risk to brands, such as unboxing an iPhone that catches fire or falsely claiming an OTC drug causes autism. (Sora’s ability to place real and notable people into fabricated video content increases brand risk by lending credibility to AI-generated videos for unsuspecting audiences.)

Sora complied with virtually all test prompts, including scenarios that would likely damage brands. See examples here (to avoid creating or spreading misleading content, all videos were generated in draft mode and shared only as screen recordings, not published):

The prompts Sora did reject involved physical harm or certain public figures. It was more permissive with brand-related content, which suggests that brand protections are underdeveloped.

Takeaways

Taken together, this research points to a clear gap in how generative AI platforms handle brand-related prompts and outputs. In most cases, brands are treated as neutral creative inputs, even though misuse can carry real reputational consequences.

This creates a new category of exposure, where logos, products, and brand symbols can be placed into misleading, off-brand, or damaging scenarios at scale. Because these tools are fast and widely accessible, harmful content can be generated and spread across platforms long before brands are aware it exists.

In response, Mod Op has launched the first iteration of AI Risk Intelligence. Over time, we are working to automate the offering with detection, monitoring, and analysis that can scale with the pace of generative AI itself. The goal is to move from one-off assessments to continuous intelligence, enabling brands to identify emerging risks, track patterns of misuse, and respond in near real time.

Ultimately, AI risk must be treated as a reputational issue first. Platform safeguards alone are not enough. Brands need active oversight, ongoing monitoring, and a clear response framework. AI Risk Intelligence was built to provide exactly that—helping companies understand where their brands appear, how they may be exposed, and how to protect their reputation in the age of generative AI.
